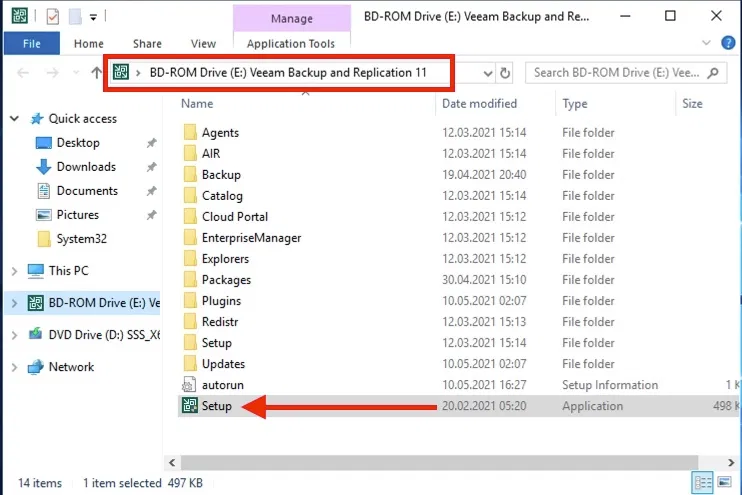

I came across a set of application servers in an environment that were not at all fault tolerant to service disruption. The disruption most often occurred around the time the virtual machine was backed up, in particular when the snapshot was taken or committed as part of the VM backup, which is pretty common.

This post is about the script I put together to check server health. It is triggered by Veeam Backup and Replication Server on the post-thaw event. The script:

- Takes an array list of service names to monitor.
- Loops through them, checks the status of each, and performs an appropriate action.
- Logs results and status to a Slack channel so the application team can monitor easily.

The action depends on the service state:

- If state = STOPPED, starts the service and writes a warning to the log if it is unable to start.
- If state = RUNNING, leaves everything as-is and logs the result.
- If state is neither STOPPED nor RUNNING, logs a warning, as the service is likely in a bad state (stuck in stopping or starting) and needs a human to check it out.
- If the service is not found, logs a warning stating that the service was not found on the system.

I have defined a config section at the top so the script can easily be adapted. It has two parts: one for Slack, if you want to use that, and the other for the services you wish to monitor.

- $slackBotName = the name of the bot posting to Slack.
- $slackKey = your Slack-generated webhook ID.
- $slackChannel = the channel you want the bot to post to.
- $servicesToMonitor = string array of service display names.
- $servicesTimeout = default amount of time to wait for a service to reach the desired state.

Now that you have the script, you can configure it on your backup job under the application settings. Follow the steps from Veeam (remember to put your script on the B&R server). Upload the script to a location on your B&R server, something like c:\Scripts\ApplicationAwareProcessing

Now when you run the job, you will see in the log that it runs the post-thaw event, and the output comes across to your Slack channel:

- 12:08:35 PM - Xbox Live Auth Manager service was stopped, we will start
- 12:08:36 PM - Windows Search service was stopped, we will start
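The per-service rules described in this post can be summarised as a small decision function. The script itself is not reproduced here, so the following is only a minimal sketch of that logic in Python, not the original implementation. The names `Action`, `decide`, and `plan` are hypothetical, and the sketch does not query Windows, start services, or post to Slack; it only maps an observed state to the action the post describes.

```python
from enum import Enum
from typing import Dict, List, Optional, Tuple


class Action(Enum):
    """Hypothetical labels for the four outcomes described in the post."""
    START = "start the service"
    NONE = "leave as-is"
    WARN_BAD_STATE = "warn: needs a human to check"
    WARN_NOT_FOUND = "warn: service not found"


def decide(state: Optional[str]) -> Action:
    """Map a service state to an action.

    `state` is None when the service does not exist on the system
    (an assumption of this sketch, not something the post specifies).
    """
    if state is None:
        return Action.WARN_NOT_FOUND
    normalized = state.strip().upper()
    if normalized == "STOPPED":
        return Action.START
    if normalized == "RUNNING":
        return Action.NONE
    # Anything else (for example stuck in stopping or starting) is flagged.
    return Action.WARN_BAD_STATE


def plan(services_to_monitor: List[str],
         current_states: Dict[str, str]) -> List[Tuple[str, Action]]:
    """Return a (display name, action) pair for every monitored service."""
    return [(name, decide(current_states.get(name)))
            for name in services_to_monitor]


if __name__ == "__main__":
    # Illustrative states only; the last service is deliberately absent.
    states = {
        "Windows Search": "Stopped",
        "Xbox Live Auth Manager": "Running",
        "Print Spooler": "StopPending",
    }
    monitored = list(states) + ["Some Missing Service"]
    for name, action in plan(monitored, states):
        print(f"{name}: {action.value}")
```

Keeping the decision separate from the side effects (starting a service, writing to the log, posting to Slack) makes the rules easy to check without touching a real server.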